Any links to online stores should be assumed to be affiliates. The company or PR agency provides all or most review samples. They have no control over my content, and I provide my honest opinion.

With the launch of HarmonyOS on the Honor Vision & Vision Pro TVs, I thought it was worth looking into the chipsets that power these devices.

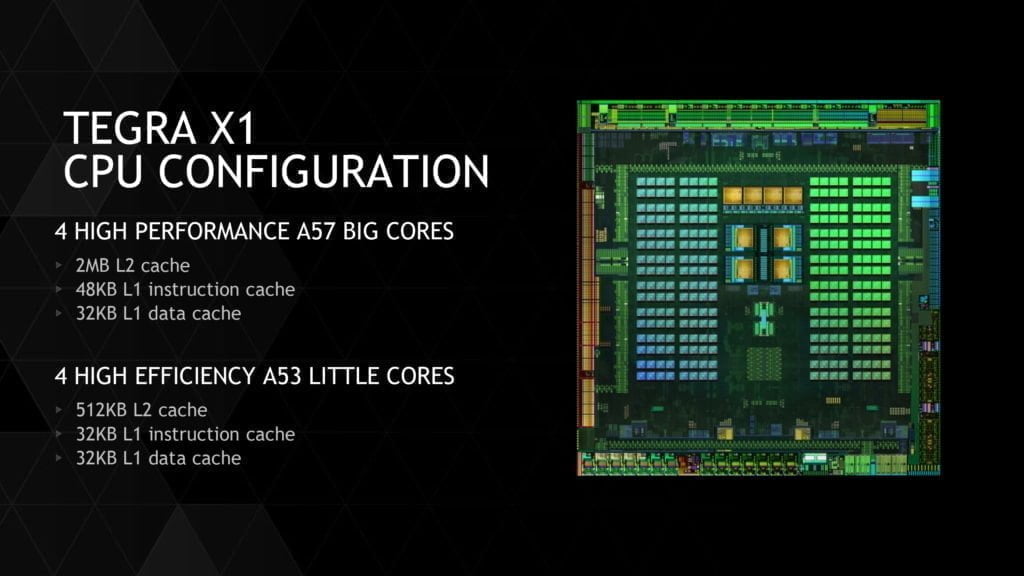

With 4K HDR playback available on most TVs, you would expect something relatively beefy powering the underlying smart OS. Flagship mobiles currently use a 7nm fabrication process, but most TVs and set-top boxes use chipsets built on a 28nm process. Even the Tegra X1, which is often regarded as the most powerful chipset for Android-based set-top boxes, is manufactured on a 20nm process, soon to be upgraded to 16nm.

The new Huawei Honghu 818 SoC follows suit and uses that rather large 28nm process, but its specification is far higher than that of chipsets found in TVs costing several thousand pounds.

Its quad-core design pairs two ARM Cortex-A72 cores with two ARM Cortex-A53 cores, combined with a Mali-G51 GPU clocked at 600MHz and 2GB of RAM. You might think that this is nothing to get too excited about, but it is sadly much better specced than the competition.

The Nvidia Tegra X1 used in the Nvidia Shield TV has 4x Cortex-A57 + 4x Cortex-A53 cores, though the Maxwell-based 256-core GPU is one of the things that makes it stand head and shoulders above its competition.

The MediaTek MT5596 is featured in many TVs that run Android, including models from Sony, Philips, TCL, and others. It was used in the Sony AF8, which I own and which is listed on the Sony website at £2,299.00 for the 55-inch model. The AF8 has a 4K HDR Processor X1 as its picture processor, but it is the MT5596 doing the day-to-day activities.

The MT5596 is a quad-core 1.1GHz Arm Cortex-A53 CPU with a Mali-T860 MP2 GPU. It is built on the 28nm fabrication process and dates back to 2016.

The MT5596 supports both the HEVC and VP9 Ultra HD codecs and 10-bit video decoding. The SoC can decode four Full HD 60 fps video streams at once, which can be handy if you decide to record multiple channels via a DVR feature.

This year the chipset has finally been updated to the MT5597, which still uses a quad-core Arm Cortex-A53 CPU, is apparently clocked lower at 1.0GHz, and uses an unnamed ARM Mali GPU.

It is claimed that this is around 50% faster than the previous generation, though I struggle to see what it is that generates this improvement.

The Amlogic S905X, which is found in many Android set-top boxes such as the Xiaomi Mi Box, uses a quad-core Cortex-A53 CPU with a Mali-450 GPU and is made on the 28nm fabrication process.

If we look at the Cortex-A72 versus the A53, the Cortex-A72 should perform considerably better, as it uses a 3-way superscalar design instead of the 2-way design of the A53. It also uses out-of-order execution to make more efficient use of instruction cycles: instructions are queued when the data they need is unavailable, and other instructions can run in the meantime. This prevents the stalls that are generally present in in-order processors when data is unavailable. There have also been mentions of improved power efficiency in the A72 due to improvements in the decode/dispatch stages of the processor.
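To make the in-order versus out-of-order difference a little more concrete, here is a minimal toy sketch. It is not a model of a real Cortex-A53 or Cortex-A72 pipeline: the `simulate` function, the instruction latencies, and the five-instruction `PROGRAM` are all made up for illustration, and only the issue widths (2 and 3) come from the comparison above.

```python
# Toy model only: latencies and the instruction mix are hypothetical and do
# not describe a real Cortex-A53 or Cortex-A72 pipeline.

def simulate(program, width, out_of_order):
    """Return the cycle at which the last result becomes available.

    program: list of (latency_in_cycles, [indices of instructions it needs])
    width: maximum number of instructions issued per cycle
    out_of_order: if False, a stalled instruction blocks all younger ones
    """
    done_at = {}                          # instruction index -> cycle its result is ready
    pending = list(range(len(program)))   # instructions not yet issued, in program order
    cycle = 0
    while pending:
        issued = 0
        for idx in list(pending):
            if issued == width:
                break
            latency, deps = program[idx]
            ready = all(d in done_at and done_at[d] <= cycle for d in deps)
            if ready:
                done_at[idx] = cycle + latency
                pending.remove(idx)
                issued += 1
            elif not out_of_order:
                break                     # in-order: wait here until the data arrives
        cycle += 1
    return max(done_at.values())


PROGRAM = [
    (4, []),      # 0: slow load (hypothetical 4-cycle latency)
    (1, [0]),     # 1: needs the result of the load
    (1, []),      # 2: independent work
    (1, []),      # 3: independent work
    (1, [2, 3]),  # 4: combines the results of 2 and 3
]

in_order = simulate(PROGRAM, width=2, out_of_order=False)
ooo = simulate(PROGRAM, width=3, out_of_order=True)
print(f"2-wide in-order: {in_order} cycles, 3-wide out-of-order: {ooo} cycles")
```

In this sketch the in-order core sits idle while it waits for the slow load, whereas the out-of-order core gets on with the independent instructions and finishes in fewer cycles. Real-world gains depend heavily on the workload.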

The Cortex-A72 and the A57 in the Tegra X1 are actually very similarly specced; looking at the ARMv8-A cores on Wikipedia reveals almost no differences.

A comparison of GPUs is a little harder. In particular, the Nvidia chipset uses a Maxwell GPU, which is much more powerful than many alternatives. It offers the same features as the laptop and desktop Maxwell-based products, such as OpenGL 4.5, CUDA 6.0, OpenGL ES 3.1 and DirectX 11.2.

The GPU offers 256 shader cores (2 SMMs) and clocks at up to 1000 MHz. The memory interface offers a maximum bandwidth of 25.6 GB/s (2x 32-bit LPDDR4-3200). In 3DMark Ice Storm, it achieved a score of around 56K.
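The 25.6 GB/s figure follows directly from the memory configuration quoted above. As a quick sanity check, assuming LPDDR4-3200 means 3200 million transfers per second per pin and using decimal gigabytes, the arithmetic can be sketched like this (the function name `peak_bandwidth_gb_s` is just an illustrative helper):

```python
def peak_bandwidth_gb_s(channels, bus_width_bits, transfers_mt_s):
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes)."""
    bytes_per_transfer = channels * bus_width_bits / 8   # bits -> bytes across all channels
    return bytes_per_transfer * transfers_mt_s / 1000    # MB/s -> GB/s


# Tegra X1 as quoted: 2x 32-bit LPDDR4-3200
tegra_x1 = peak_bandwidth_gb_s(channels=2, bus_width_bits=32, transfers_mt_s=3200)
print(f"Tegra X1 peak memory bandwidth: {tegra_x1:.1f} GB/s")
```

This is a theoretical peak; sustained bandwidth in real workloads will be lower.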

The number of cores in the Honghu 818's Mali-G51 GPU isn't stated, but the Kirin 710 uses an ARM Mali-G51 MP4. In 3DMark Ice Storm, that GPU achieved a score of around 20K, so less than half that of the Maxwell GPU.

The Mali-T860 MP2 in the MT5596 scores just 9.5K, so less than half again.
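To put the three approximate Ice Storm scores side by side, here is a small sketch that works out each GPU's score relative to the fastest one. The scores are the rough figures quoted above, and the Mali-G51 number is the Kirin 710 (MP4) result used as a stand-in, since the Honghu 818's core count isn't known. The `relative_scores` helper is purely illustrative.

```python
# Approximate 3DMark Ice Storm scores quoted in this article.
# The Mali-G51 entry is the Kirin 710 (MP4) result, used as a stand-in.
SCORES = {
    "Tegra X1 (Maxwell)": 56_000,
    "Mali-G51 MP4 (Kirin 710)": 20_000,
    "Mali-T860 MP2 (MT5596)": 9_500,
}


def relative_scores(scores):
    """Return each score as a fraction of the highest score."""
    best = max(scores.values())
    return {name: score / best for name, score in scores.items()}


for name, fraction in relative_scores(SCORES).items():
    print(f"{name}: {fraction:.0%} of the fastest GPU")
```

These are single synthetic benchmark results, so treat the percentages as a rough guide rather than a precise ranking.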

Overall, the Huawei Honghu 818 SoC looks very well specced for a chipset in a TV costing less than £600.

I am James, a UK-based tech enthusiast and the Editor and Owner of Mighty Gadget, which I’ve proudly run since 2007. Passionate about all things technology, my expertise spans from computers and networking to mobile, wearables, and smart home devices.

As a fitness fanatic who loves running and cycling, I also have a keen interest in fitness-related technology, and I take every opportunity to cover this niche on my blog. My diverse interests allow me to bring a unique perspective to tech blogging, merging lifestyle, fitness, and the latest tech trends.

In my academic pursuits, I earned a BSc in Information Systems Design from UCLAN, before advancing my learning with a Master’s Degree in Computing. This advanced study also included Cisco CCNA accreditation, further demonstrating my commitment to understanding and staying ahead of the technology curve.

I’m proud to share that Vuelio has consistently ranked Mighty Gadget as one of the top technology blogs in the UK. With my dedication to technology and drive to share my insights, I aim to continue providing my readers with engaging and informative content.